Ke Chen, Hao-Wen Dong, Yi Luo, Julian Mcauley, Taylor Berg-Kirkpatrick, Miller Puckette, Shlomo Dubnov

Proceedings of the 23rd International Society for Music Information Retrieval Conference, Dec 2022, Bengaluru, India.

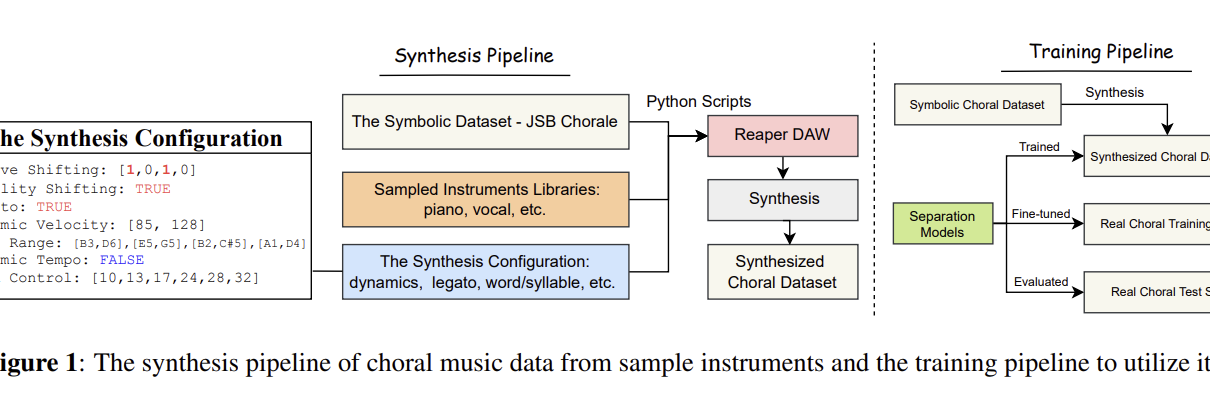

Abstract: Choral music separation refers to the task of extracting tracks of voice parts (e.g., soprano, alto, tenor, and bass) from mixed audio. The lack of datasets has impeded research on this topic as previous work has only been able to train and evaluate models on a few minutes of choral music data due to copyright issues and dataset collection difficulties. In this paper, we investigate the use of synthesized training data for the source separation task on real choral music. We make three contributions: first, we provide an automated pipeline for synthesizing choral music data from sampled instrument plugins within controllable options for instrument expressiveness. This produces an 8.2-hour-long choral music dataset from the JSB Chorales Dataset and one can easily synthesize additional data. Second, we conduct an experiment to evaluate multiple separation models on available choral music separation datasets from previous work. To the best of our knowledge, this is the first experiment to comprehensively evaluate choral music separation. Third, experiments demonstrate that the synthesized choral data is of sufficient quality to improve the model’s performance on real choral music datasets. This provides additional experimental statistics and data support for the choral music separation study.